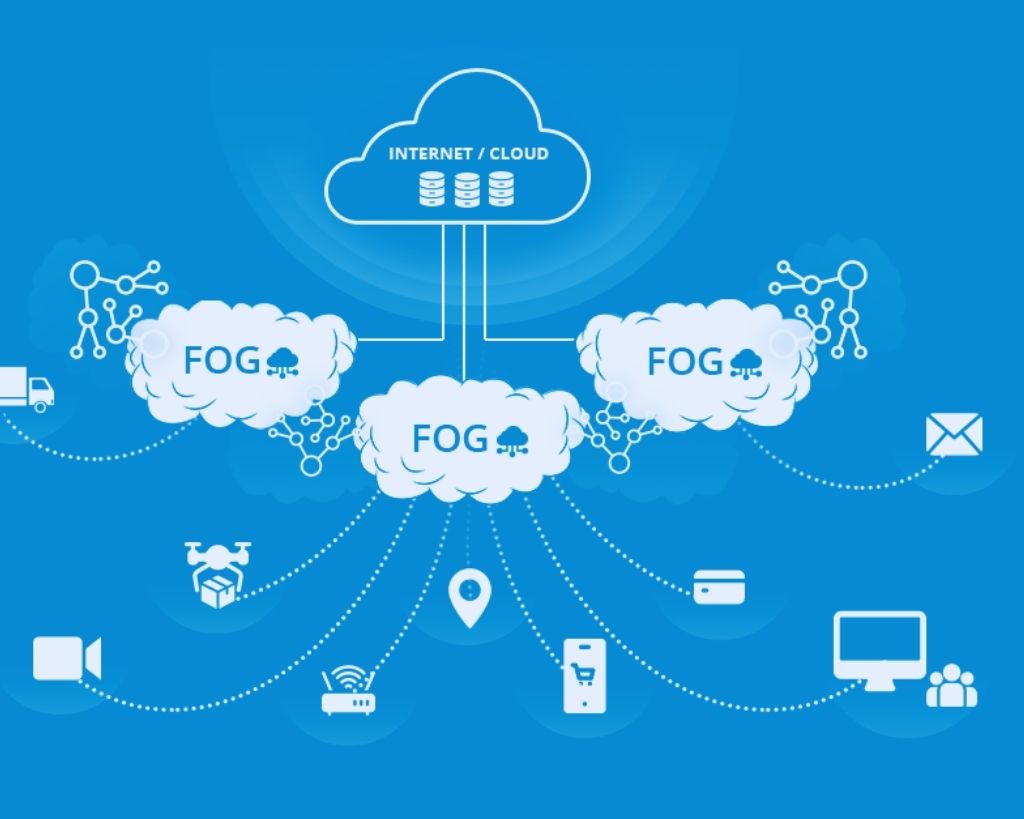

With Fog Computing, the vision of cloud computing and its software-as-a-service concept for the shop floor can become a reality. The first step is that MES functions are encapsulated via a fog layer, enabling efficient data exchange even with low bandwidth.

A fog layer condenses the data before it is loaded into the cloud. In this way, latency times can be shortened, and costs saved.

The fog around cloud computing is clearing. Almost every major manufacturing company has set cloud computing as a strategic IT goal. Still, it is often unclear how a corresponding software-as-a-service concept can be implemented in practice and justified by business cases. As a first practicable step, Proxima Software from Ebersberg near Munich has now encapsulated its MES functions in a so-called fog computing layer to divide the path to cloud computing into economically attractive stages.

The term fog computing, also known as local cloud, refers to a network structure (fog layer) in which data generated by end devices (edge devices) is not loaded directly into the cloud for processing but is first preprocessed in a decentralized manner. In this way, data streams, for example from the programmable logic controllers (PLCs) of the machining centers, are analyzed on-site in a resource-saving manner, and only relevant data extracts are sent to the cloud.
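To make the idea of on-site preprocessing concrete, here is a minimal Python sketch of how a fog node might condense a batch of raw readings into a small extract. The function name `condense`, the thresholds, and the sample temperatures are illustrative assumptions, not part of Proxima MES.

```python
from statistics import mean


def condense(readings, low, high):
    """Reduce raw sensor readings to a summary plus the out-of-range values."""
    if not readings:
        return {"count": 0, "mean": None, "min": None, "max": None, "outliers": []}
    return {
        "count": len(readings),
        "mean": mean(readings),
        "min": min(readings),
        "max": max(readings),
        # Only values outside the accepted band are kept individually.
        "outliers": [r for r in readings if r < low or r > high],
    }


# Hypothetical spindle temperatures (degrees Celsius) from one machining center.
raw = [61.0, 62.0, 63.0, 90.0, 62.0]
extract = condense(raw, low=50.0, high=80.0)
```

Instead of five raw values, only the summary and the single out-of-range reading would go to the cloud. With real PLC streams the reduction would be correspondingly larger.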


Background: Often, the necessary bandwidth is not available, or the connection is interrupted for a short time. Under these conditions, large amounts of data cannot be sent reliably to an external data center (cloud). A fog layer mitigates this by acting as a buffer.
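The buffering role can be sketched as a simple store-and-forward queue. The class below is an assumption for illustration only: `send` stands for any hypothetical uplink function that returns `True` on success, and the queue size is arbitrary.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Hold items locally and forward them in order once the uplink works."""

    def __init__(self, send, max_items=1000):
        self._send = send  # hypothetical uplink callable: item -> bool
        # When the deque is full, appending silently drops the oldest item.
        self._queue = deque(maxlen=max_items)

    def submit(self, item):
        """Queue an item and try to forward everything that is pending."""
        self._queue.append(item)
        return self.flush()

    def flush(self):
        """Send pending items oldest first; stop at the first failure."""
        while self._queue:
            if not self._send(self._queue[0]):
                return False
            self._queue.popleft()
        return True

    def pending(self):
        return len(self._queue)
```

An item is removed from the queue only after the uplink confirms it, so a short interruption delays the data rather than losing it. A production system would also persist the queue to disk, which this sketch omits.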

Higher Data Security With Low Latency Times

The basic idea of the fog layer is to compress data first and only then send it to the cloud. This has several advantages: not only are long latencies avoided, but costs are also saved. Most cloud business models are billed per transaction: it is not the data processing that costs money, but the transfer of data back and forth.

Therefore, sending large amounts of unprocessed raw data to the cloud is an expensive undertaking.
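How much the transfer volume shrinks can be estimated with a few lines of Python. The sketch below uses lossless `zlib` compression from the standard library as a stand-in. The sample data, the signal name, and the flat per-gigabyte price model are assumptions, and the article does not say which method Proxima uses.

```python
import json
import zlib

# Hypothetical raw stream: 1,000 readings of one signal, serialized as JSON.
readings = [
    {"signal": "spindle_temp", "t": i, "value": 60.0 + (i % 5)}
    for i in range(1000)
]
raw_bytes = json.dumps(readings).encode("utf-8")

# Lossless compression before upload.
compressed = zlib.compress(raw_bytes, level=9)


def transfer_cost(num_bytes, price_per_gb):
    """Cost of moving num_bytes at a flat, hypothetical per-gigabyte price."""
    return num_bytes / 1e9 * price_per_gb


# Fraction of the original volume that still has to be transferred.
ratio = len(compressed) / len(raw_bytes)
```

Repetitive machine data of this kind usually compresses well, so `ratio` ends up well below 1. Under a transfer-based price model, the cost falls in the same proportion.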

The issue of data security also plays a crucial role: the data must be encrypted because it is exposed to hacker attacks when it is transferred to the cloud.

The fog computing concept used by Proxima takes this aspect into account: the semantic description of the data, the so-called metadata (descriptive, structural information about the raw data), resides in the “Proxima MES,” that is, in the fog layer, and is thus separated from the raw data.

This separation serves the data security requirements of production companies. The metadata, which is particularly sought after by cybercriminals, is stored with special protection and is thus shielded from unauthorized outside access.
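One way to picture this separation is a local registry that keeps the descriptive metadata in the fog layer and passes only opaque identifiers and values to the cloud. The following Python sketch is purely illustrative: the class `MetadataRegistry`, the record layout, and the sample signal are assumptions and do not describe the actual design of Proxima MES.

```python
import uuid


class MetadataRegistry:
    """Keeps the meaning of each signal local; the cloud sees only opaque IDs."""

    def __init__(self):
        self._id_by_key = {}
        self._meta_by_id = {}

    def opaque_id(self, machine, signal, unit):
        """Return a stable random ID for a signal, creating it on first use."""
        key = (machine, signal, unit)
        if key not in self._id_by_key:
            token = uuid.uuid4().hex
            self._id_by_key[key] = token
            self._meta_by_id[token] = {
                "machine": machine,
                "signal": signal,
                "unit": unit,
            }
        return self._id_by_key[key]

    def describe(self, token):
        """Resolve an ID back to its metadata (possible only in the fog layer)."""
        return self._meta_by_id[token]


def to_cloud_record(registry, machine, signal, unit, value):
    """Build the record that leaves the site: an ID and a value, no semantics."""
    return {"id": registry.opaque_id(machine, signal, unit), "value": value}


registry = MetadataRegistry()
record = to_cloud_record(registry, "mill-07", "spindle_temp", "degC", 61.5)
```

Anyone intercepting `record` sees a random identifier and a number. The information that the number is a spindle temperature of a particular machine stays in the local registry.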

Fog Computing As A Future-Proof Basis For The Cloud

The path to software-as-a-service is mapped out in cloud computing: in the first step, the compressed data must be transferred to the cloud before applications such as analysis tools can work in the cloud. Creating cloud applications that use local data would be far too expensive.

On the other hand, the cloud can show its strengths when evaluated data is accessed from different locations. As part of the further development of its system architecture, Proxima MES is being decoupled component by component from the on-premises architecture and made available as SaaS. This is intended to make the system provider’s software portfolio future-proof.